In the University of Michigan's ROB 550 class (Armlab), I worked as part of a team to develop software for controlling a ReactorX 200 robotic arm, a 5-degree-of-freedom manipulator powered by Dynamixel servo motors. Like Botlab, the lab is structured as a series of challenges leading up to a final competition, with teams progressively building perception, kinematics, and motion planning capabilities for autonomous manipulation.

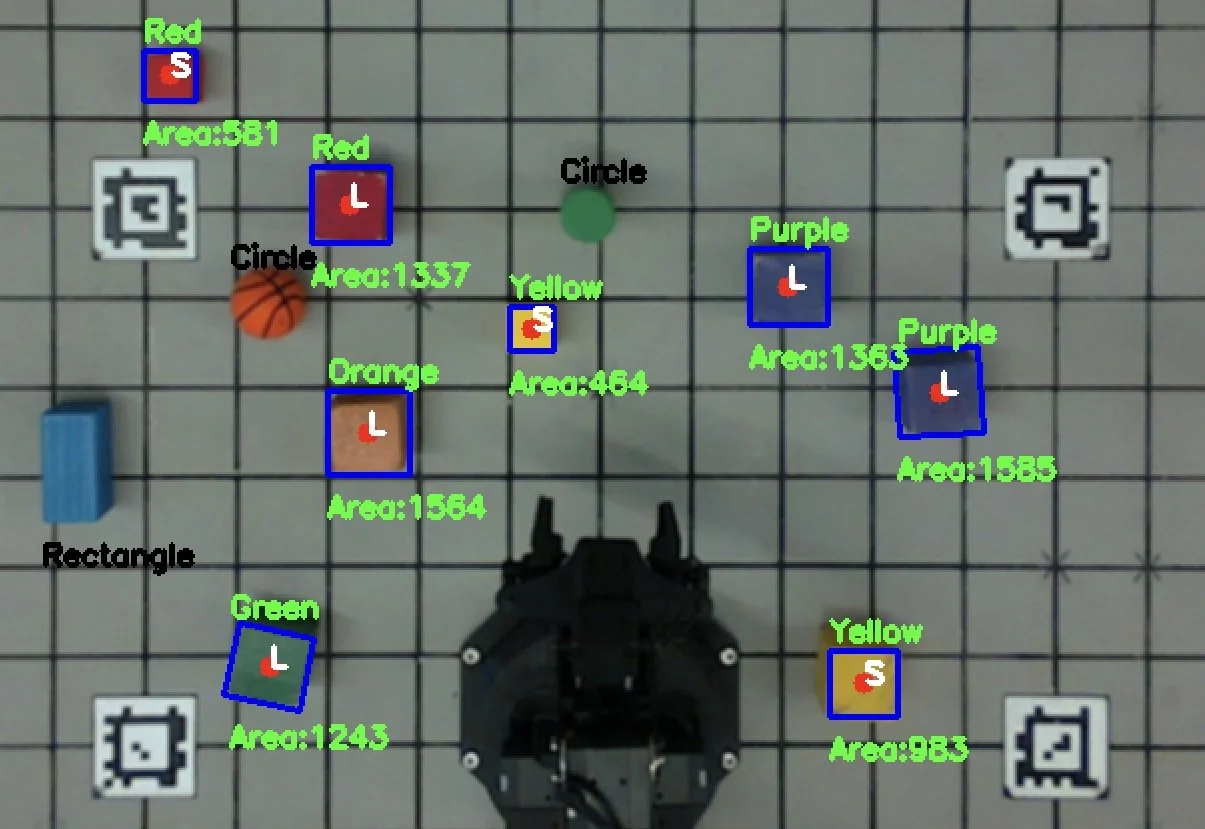

The system used an RGB-D camera and computer vision techniques for color and object identification, allowing the robot to detect and localize blocks within its workspace. We implemented forward and inverse kinematics along with trajectory planning to generate reliable pick-and-place motions for stacking blocks.
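As a rough illustration of the inverse kinematics idea, here is a minimal Python sketch for a simplified planar two-link arm. It is not our Armlab code: the real arm has 5 degrees of freedom, and the function names and the 0.20 m link lengths are hypothetical values chosen for the example. The forward kinematics function is included so the solution can be checked by mapping the joint angles back to a position.

```python
import math


def forward_kinematics(theta1, theta2, l1, l2):
    """Return the (x, y) end-effector position of a planar two-link arm.

    theta1 is the shoulder angle from the x-axis; theta2 is the elbow
    angle measured relative to the first link (both in radians).
    """
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    return x, y


def inverse_kinematics(x, y, l1, l2, flip_elbow=False):
    """Return joint angles (theta1, theta2) that reach the point (x, y).

    Uses the law of cosines. A reachable point generally has two
    solutions (mirrored elbow); flip_elbow selects the other one.
    Raises ValueError if the target is outside the reachable workspace.
    """
    r_squared = x * x + y * y
    cos_theta2 = (r_squared - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(cos_theta2) > 1.0 + 1e-9:
        raise ValueError("target is outside the reachable workspace")
    # Clamp to absorb floating-point error at the workspace boundary.
    cos_theta2 = max(-1.0, min(1.0, cos_theta2))

    sin_theta2 = math.sqrt(1.0 - cos_theta2 * cos_theta2)
    if flip_elbow:
        sin_theta2 = -sin_theta2

    theta2 = math.atan2(sin_theta2, cos_theta2)
    theta1 = math.atan2(y, x) - math.atan2(l2 * sin_theta2, l1 + l2 * cos_theta2)
    return theta1, theta2


if __name__ == "__main__":
    # Hypothetical link lengths in meters, for illustration only.
    L1, L2 = 0.20, 0.20
    target = (0.25, 0.10)

    angles = inverse_kinematics(*target, L1, L2)
    reached = forward_kinematics(*angles, L1, L2)
    print("joint angles (rad):", angles)
    print("reached position (m):", reached)
```

Running the forward kinematics on the returned angles should reproduce the target position, which is a handy sanity check before sending joint commands to hardware.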

Throughout the project, we iteratively refined the robot’s motion paths and perception pipeline to improve accuracy and consistency. By the end of the lab, our system could stack up to 15 blocks, which demonstrated reliable object detection and manipulation. Although we unfortunately did not capture a video of the final full stack, the photos and videos included here show how we refined the robot’s path movements and developed the camera-based color and object identification used to detect, pick up, and color-sort the blocks.

The class website is available here: https://rob550-docs.github.io/